JSON Prompts Aren’t a Performance Hack

JSON doesn’t make models smarter, faster, or cheaper—it just makes requirements easier to specify and reuse. The real lever is clarity: intent, constraints, and tight control of output length and tokens.

JSON Prompts Don’t Make Models Smarter (or Cheaper). They Just Make You More Organized.

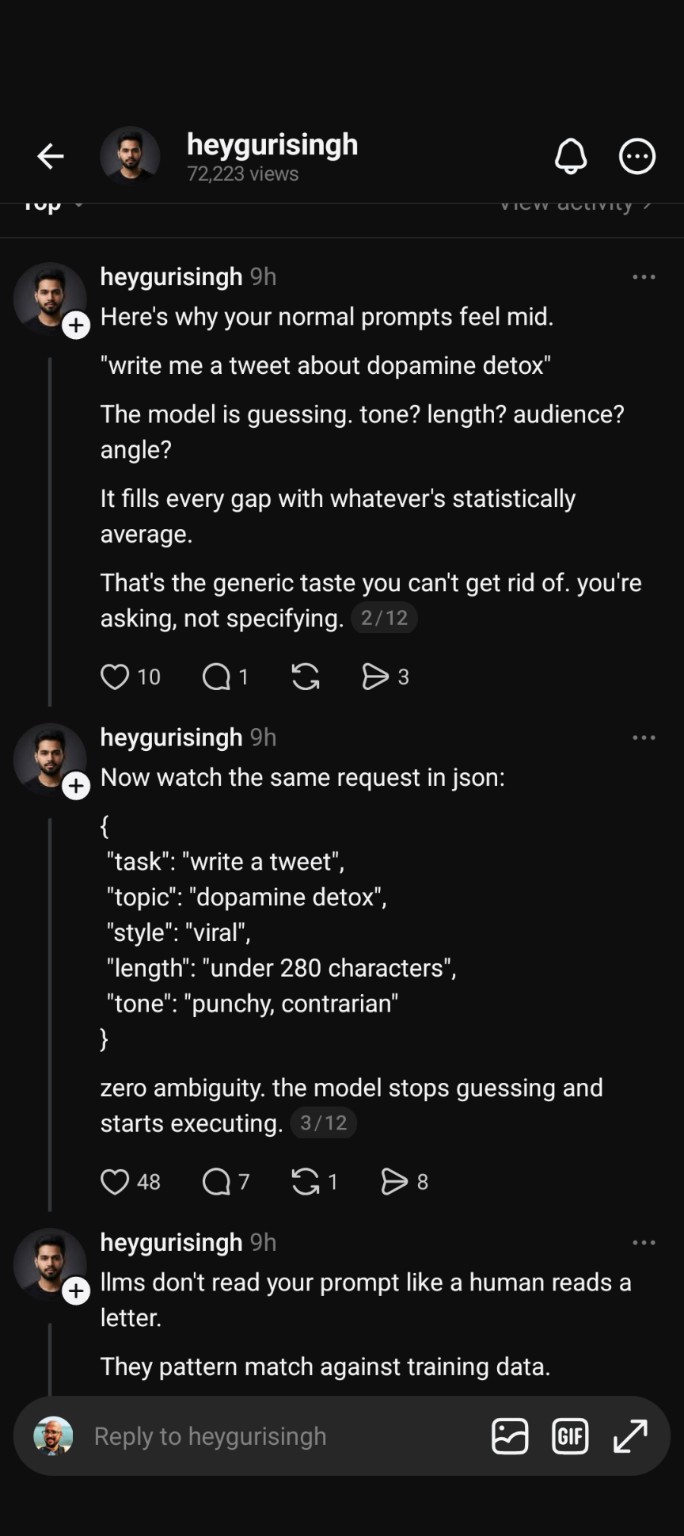

Every few weeks, a post makes the rounds claiming some version of: “If you prompt in JSON, the model performs better.” Usually it comes packaged as a productivity hack—structure your prompt like code and the AI will finally behave.

That claim is partly true in the way most internet advice is partly true: it points at a real phenomenon (specific instructions produce better outputs), then attributes it to the wrong cause (JSON itself), and slides into a performance myth (faster, cheaper, “less guessing”).

Let’s separate what’s real from what’s marketing.

What those posts get right: specificity beats vibes

Models respond better when you remove ambiguity.

If you define the tone, audience, length, and format, you’re not “unlocking hidden intelligence.” You’re doing something simpler: preventing the model from having to invent missing requirements.

A prompt like:

- “Write a tweet about dopamine detox.”

is wide open. Should it be funny? Clinical? Contrarian? Inspirational? A single line or the full 280 characters? Meant for 19-year-old biohackers or for therapists?

So the model does what it always does when you don’t specify: it defaults to the most common, safest pattern. That’s why vague prompts often feel like the world’s most average intern wrote them.

Meanwhile, a prompt like:

- “Write a punchy, contrarian tweet under 280 characters about dopamine detox. No hashtags. Slightly sarcastic.”

produces something noticeably better—not because of any magic format, but because you constrained the output space.

This is the core truth behind “JSON prompting works.” Specificity works.

The misleading part: JSON isn’t special

The model doesn’t become smarter because you wrapped your instructions in braces.

You can express the same constraints in plain English and get the same quality:

- Plain English: “Write a punchy, contrarian tweet under 280 characters about dopamine detox.”

- JSON-ish:

{"task":"tweet","tone":"punchy","stance":"contrarian","max_chars":280,"topic":"dopamine detox"}

Both communicate the same intent. The second one just looks more technical, which makes it easier to believe it’s a cheat code.

The real driver is: clear requirements. JSON is merely one way to package them.
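To make the equivalence concrete, here is a minimal sketch that renders one set of requirements both ways. The field names mirror the JSON-ish example above, and the helper names `to_json_prompt` and `to_english_prompt` are made up for illustration.

```python
import json

# Requirements for the tweet example; the keys mirror the JSON-ish prompt above.
spec = {
    "task": "tweet",
    "tone": "punchy",
    "stance": "contrarian",
    "max_chars": 280,
    "topic": "dopamine detox",
}

def to_json_prompt(spec: dict) -> str:
    """Package the requirements as compact JSON."""
    return json.dumps(spec, separators=(",", ":"))

def to_english_prompt(spec: dict) -> str:
    """Package the same requirements as one plain-English sentence."""
    return (
        f"Write a {spec['tone']}, {spec['stance']} {spec['task']} "
        f"under {spec['max_chars']} characters about {spec['topic']}."
    )

print(to_json_prompt(spec))
print(to_english_prompt(spec))
```

Both strings are built from the same dictionary, so neither carries information the other lacks. The only thing that changes is the packaging.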

“The model is just guessing randomly” is also wrong

Another common framing is that without structure, the model is “randomly guessing,” and JSON stops it from doing that.

That’s not how this works.

Yes, models generate probabilistically. But they’re not rolling dice in the dark. They’re using patterns learned during training plus the constraints you give them in the prompt context.

When you don’t specify dimensions (tone, scope, audience, format), the model fills them with defaults that are statistically common and broadly acceptable. It’s not clueless; it’s conservative.

So the issue isn’t “randomness.” The issue is underspecification.

Image credit: Wikimedia Commons

Over-structuring can backfire (especially for creative work)

There’s also a point where structure becomes a cage.

If you cram a creative task into an overly rigid schema—ten fields of tone parameters, multiple nested constraints, a “voice_profile” object, and strict section counts—you can end up with writing that feels robotic. Not because JSON is bad, but because you’re forcing the model to satisfy the form instead of the outcome.

This shows up as:

- unnatural phrasing (because it’s trying to hit every checkbox)

- blandness (because it’s avoiding violating constraints)

- “template smell” (everything comes out with the same rhythm)

Structure is a tool. The goal is still good output, not a perfectly filled configuration file.

The app-developer question: will JSON prompting run faster and use fewer tokens?

No.

If anything, JSON often costs more.

1) Tokens determine cost (not the aesthetic of the prompt)

You pay for tokens in and tokens out. JSON usually includes extra characters and repeated keys:

- quotes

- braces

- field names like "tone":, "audience":, "format":

Those are tokens too.

So if your only goal is cost efficiency, JSON is not a discount coupon. It can be slightly more expensive than a tight English prompt that conveys the same constraints.
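A rough way to see the overhead is to count pieces in both versions of the tweet prompt. The sketch below is not a real tokenizer: it treats every word and every punctuation mark as one piece, which overstates the gap compared with the tokenizers providers actually use. Treat the result as directional only and use your provider's own token counter for real numbers.

```python
import re

def rough_token_count(text: str) -> int:
    """Crude proxy for token count: one piece per word or punctuation mark.

    This is an assumption for illustration, not how any real model tokenizes.
    """
    return len(re.findall(r"\w+|[^\w\s]", text))

english = "Write a punchy, contrarian tweet under 280 characters about dopamine detox."
as_json = (
    '{"task":"tweet","tone":"punchy","stance":"contrarian",'
    '"max_chars":280,"topic":"dopamine detox"}'
)

print("english:", rough_token_count(english))
print("json:   ", rough_token_count(as_json))
```

The quotes, colons, and key names all count, which is why the JSON version comes out larger even though it says nothing extra.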

2) Speed is mostly tokens + model + latency

Runtime is influenced by:

- how many tokens the model has to process and generate

- which model you’re using (bigger models are slower)

- network latency and system overhead

- output length

The speed difference between “punchy tweet under 280 chars” and a JSON-wrapped version is usually negligible in real-world apps. But if you’re trying to optimize at scale, the only reliable lever is: reduce tokens and reduce output length (or use a smaller model).

3) JSON helps reliability, not performance

This is where JSON actually shines—just not in the way the viral posts claim.

JSON can be useful because it’s:

- easier to generate programmatically (you can build it from UI fields)

- more consistent across a team (less “prompt drift”)

- better for multi-step workflows (agents, pipelines, tool calls)

- harder to forget key parameters (because your schema reminds you)

That’s engineering convenience. Not magic.
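Here is a small sketch of that convenience: a prompt assembled from UI fields, with a check that refuses to build it when a required parameter is missing. The field list and the `build_prompt` helper are hypothetical; a real app would choose its own schema.

```python
import json

# Hypothetical schema: the parameters this app refuses to send a prompt without.
REQUIRED_FIELDS = ("task", "tone", "audience", "max_chars")

def build_prompt(fields: dict) -> str:
    """Build a JSON prompt from UI fields, failing loudly if one is missing or empty."""
    missing = [
        key for key in REQUIRED_FIELDS
        if key not in fields or fields[key] is None or fields[key] == ""
    ]
    if missing:
        raise ValueError(f"missing prompt fields: {', '.join(missing)}")
    # Only schema fields are emitted, in a stable order, so every teammate's
    # prompt has the same shape.
    return json.dumps({key: fields[key] for key in REQUIRED_FIELDS})

print(build_prompt({
    "task": "tweet",
    "tone": "punchy",
    "audience": "therapists",
    "max_chars": 280,
}))
```

The model gains nothing from the validation. The team does, because a forgotten `audience` fails in your code instead of showing up later as a vague output.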

A better mental model: intent + constraints, not hacks

If you want consistently good outputs in an app, think like this:

- What is the intent? Are you trying to persuade, summarize, classify, brainstorm, rewrite, extract?

- What constraints actually matter? Tone, audience, length, reading level, format, allowed/forbidden elements.

- What can be safely left to defaults? Not everything needs a field. Over-constraining makes outputs stiff.

If you do this, you’ll get most of the “JSON prompting” benefit while staying flexible.

If your goal is efficiency at scale, do these things instead

When the real concern is cost and speed (not prompt aesthetics), the playbook is boring but effective:

Keep prompts short

Strip ceremony. Don’t repeat what the model already knows. Don’t wrap every request in a manifesto.

Constrain output length aggressively

This is the biggest silent cost driver because output tokens are often the bulk of the bill.

Use constraints like:

- “Max 120 words.”

- “Return 5 bullets only.”

- “Return JSON only.” (This is about output parseability, not intelligence.)
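A back-of-the-envelope calculation shows why capping output tends to matter more than trimming the prompt. The prices and token counts below are invented for illustration; plug in your provider's real rates and your own measurements.

```python
# Hypothetical prices in dollars per million tokens (assumed, not any provider's real rates).
PRICE_IN_PER_M = 1.00
PRICE_OUT_PER_M = 4.00

def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated dollar cost of one request under the assumed prices."""
    return (input_tokens * PRICE_IN_PER_M + output_tokens * PRICE_OUT_PER_M) / 1_000_000

baseline = request_cost(input_tokens=200, output_tokens=600)
trimmed_prompt = request_cost(input_tokens=180, output_tokens=600)  # shaved 20 prompt tokens
capped_output = request_cost(input_tokens=200, output_tokens=150)   # "Max 120 words."

for name, cost in [("baseline", baseline),
                   ("trimmed prompt", trimmed_prompt),
                   ("capped output", capped_output)]:
    print(f"{name}: ${cost:.6f}")
```

Under these assumptions, polishing the prompt barely moves the total, while a hard cap on the output cuts it to a fraction. The exact ratio depends entirely on the numbers you plug in.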

Use structured outputs for structured tasks

Classification, extraction, scoring, tagging—these benefit from predictable formatting. JSON is great here because your app can parse it reliably.
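As a sketch of what “parse it reliably” can look like, the function below validates a classification result before the rest of the app touches it. The label set and the `label`/`score` field names are assumptions for this example, not a standard.

```python
import json

# Hypothetical label set for a sentiment-style classifier.
ALLOWED_LABELS = {"positive", "negative", "neutral"}

def parse_classification(raw: str) -> dict:
    """Parse the model's reply and reject anything that does not match the expected shape."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model did not return valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")

    label = data.get("label")
    score = data.get("score")
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        raise ValueError(f"unexpected label: {label!r}")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        raise ValueError(f"score must be a number, got {score!r}")
    if not 0 <= score <= 1:
        raise ValueError(f"score must be between 0 and 1, got {score!r}")
    return {"label": label, "score": float(score)}

print(parse_classification('{"label": "positive", "score": 0.92}'))
```

A reply that fails validation can be retried or logged instead of silently corrupting downstream steps. That is the reliability benefit in practice.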

Use cheaper models when the task allows it

Not every step needs the flagship model. Routing is underrated: lightweight model for extraction and formatting, stronger model for high-stakes generation.
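Routing can start out as a lookup. In this sketch the model names and the list of “light” tasks are placeholders; which tasks a smaller model handles well is something to measure on your own data.

```python
# Placeholder model identifiers (hypothetical, not real model names).
LIGHT_MODEL = "small-model"
STRONG_MODEL = "flagship-model"

# Assumed set of tasks that a lightweight model can handle.
LIGHT_TASKS = {"extract", "classify", "format", "tag"}

def pick_model(task: str, high_stakes: bool = False) -> str:
    """Send routine structured work to the light model and everything else to the strong one."""
    if high_stakes or task not in LIGHT_TASKS:
        return STRONG_MODEL
    return LIGHT_MODEL

print(pick_model("extract"))                    # routine structured task
print(pick_model("generate"))                   # open-ended generation
print(pick_model("classify", high_stakes=True)) # routine task, but errors are costly
```

Defaulting unknown tasks to the stronger model is a deliberate choice here: a misrouted request costs a little more rather than producing a worse result.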

Avoid verbosity as a default

Many models “helpfully” elaborate unless you tell them not to. If you’re building an app, verbosity is not friendliness; it’s wasted tokens.

So when should you use JSON prompts?

Use JSON when it helps you:

- you’re assembling prompts dynamically from variables

- multiple devs need a consistent interface

- you’re running multi-step workflows where missing a parameter breaks a chain

- you need outputs that are easy to parse and validate

Don’t use JSON because you think it makes the model “stop guessing,” become “smarter,” or run “faster.”

That’s not what’s happening.

Conclusion

JSON prompting is mostly an organizational choice, not a performance hack. Better outputs come from better constraints—tone, audience, length, and clear intent—whether you write them in English or wrap them in braces. If you’re optimizing an app for speed and cost, focus on token count, output length, and model selection, because that’s what actually moves the needle.