AI Isn’t a Nervous System Until It Can’t Opt Out

Neural nets can resemble nervous tissue structurally, but the organism metaphor smuggles in something crucial: closed-loop, unavoidable stakes. The real shift happens when an agent is embodied, persistent, and forced to treat damage and energy limits as non-optional constraints.

Is AI a Digital Nervous System?

It’s tempting to describe modern AI as a “digitally created nervous system running on a server.” The metaphor is useful—until you take it literally.

Neural networks are explicitly inspired by biology: layers, weighted connections, activation patterns. At a distance, it looks like nervous tissue: lots of tiny computations whose collective behavior feels intelligent. But the deeper you push the analogy, the more the missing pieces start to matter.

The question isn’t whether the metaphor is wrong. The question is: what does the metaphor accidentally smuggle in—especially when people slide from “nervous system-like computation” to “living organism”?

Where the Nervous System Analogy Works (And Why It’s Seductive)

There are real similarities that make the comparison feel natural:

- Distributed computation: intelligence-like behavior can emerge from many simple units interacting.

- Learned behavior vs hard-coded rules: training shapes behavior the way experience shapes an animal.

- Robustness (sometimes): damage or noise doesn’t always kill the whole system; the behavior degrades.

If you stop here, “digital nervous system” sounds pretty reasonable. But the similarity is mostly structural, not existential.

A nervous system is not just a signal processor. It’s part of a living loop.

The Missing Ingredient Isn’t “More Input.” It’s Closed-Loop Self-Relevance.

A human nervous system isn’t just a bundle of inputs (sight, hunger, pain) plus outputs (actions). It’s a closed loop where the organism is the thing at stake.

That’s the key difference.

A natural objection: “Okay, but senses are basically constant prompts, right? If we just constantly prompt an AI with sensory streams, won’t it become alive?”

Not by itself.

Why “constant prompts” still isn’t “having senses”

You can pipe an endless stream of text into a model. You can even pipe in structured sensor data: temperature, distance, damage readings, battery percentage. But in animals:

- inputs are not optional

- inputs are self-relevant

- inputs have consequences that the organism cannot escape

Pain is not a message in the way a prompt is a message. Pain is a constraint on continued existence. Hunger isn’t “a notification.” It’s the body asserting a demand that can’t be negotiated away indefinitely.

Prompts are questions. Senses are pressures.

The difference isn’t about bandwidth. It’s about whether the system is trapped inside the consequences.

The Real Boundary: Can the System Opt Out of Consequences?

Here’s a clean way to draw the line:

- A tool processes inputs and produces outputs, but the stakes live outside the tool (in the user, the institution, or the environment).

- An organism-like agent is embedded in loops where its own continued operation is on the line.

In biology, those loops are unavoidable. You can’t “turn off hunger” because you found it inconvenient. You can’t uninstall “pain.” You can’t reboot your way out of damage.

A lot of current AI is the opposite:

- invoked, not continuous

- interruptible and resettable

- consequence-free for itself (the consequences land socially, economically, politically—on humans)

That doesn’t make it harmless. It just means it’s not organism-like in the particular way people imagine when they say “nervous system.”
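The tool/organism line above can be made concrete in a few lines. This is a minimal Python sketch under a toy assumption: the only difference that matters is where state lives and whether it can be wiped. The names `tool_call` and `EmbodiedAgent`, and the integer battery and damage units, are hypothetical and not drawn from any real robotics stack.

```python
from dataclasses import dataclass


def tool_call(prompt: str) -> str:
    """A tool: invoked on demand and stateless between calls.

    Whatever the output causes happens to the caller, not to the tool.
    """
    return f"answer to: {prompt}"


@dataclass
class EmbodiedAgent:
    """An organism-like agent: persistent state that its own actions use up.

    Hypothetical units: battery and damage are integer percentages.
    """
    battery: int = 100  # percent charge remaining
    damage: int = 0     # percent accumulated wear; only ever increases here

    def act(self, cost: int) -> None:
        # Acting drains energy and adds wear, and both carry over to every later step.
        self.battery = max(0, self.battery - cost)
        self.damage = min(100, self.damage + 1)

    @property
    def alive(self) -> bool:
        # An empty battery or total damage ends operation.
        return self.battery > 0 and self.damage < 100

    def reset(self) -> None:
        # Deliberately unsupported: the agent cannot opt out of its own history.
        raise RuntimeError("no reset: consequences persist")
```

Calling `tool_call` twice gives the same answer because nothing about the tool changed. Calling `act` ten times with a cost of 10 leaves the agent at 0% battery and 10% wear, with no method that puts it back.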

The Robot Scenario: When It Starts Getting Serious

Now take the thought experiment that actually bites:

What if the neural network runs locally on a robot with constant sensory streaming—battery, wear, temperature, damage—and it can’t just be reset? Wouldn’t it “strive” to secure recharge and repairs, maybe even by finding a job?

This is the hinge point. Moving from “cloud tool” to “embodied, persistent, resource-constrained agent” changes the category.

If the system:

- runs locally (not just a remote service)

- has continuous sensing and actuation

- has persistent memory/identity over time

- is constrained by battery, damage, thermal limits

- experiences irreversible degradation when it fails

…then you’re no longer talking about “just prompts.” You’re talking about self-maintenance dynamics.

At that point, the analogy to organism behavior becomes less metaphorical and more architectural. Battery starts to look like hunger. Damage starts to look like pain. Maintenance starts to look like immune function and repair. The world stops being “a dataset” and becomes “a place that can kill you.”
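One common way to make “battery looks like hunger” precise, borrowed from homeostatic accounts of motivation, is to treat each drive as the distance between a sensed variable and the value the body needs it to sit at. A minimal sketch, assuming hypothetical setpoints, units, and variable names:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BodyState:
    battery: float      # 0.0 (empty) to 1.0 (full)
    damage: float       # 0.0 (intact) to 1.0 (destroyed)
    temperature: float  # core temperature in degrees Celsius


# Hypothetical setpoints and scale, chosen only for illustration.
BATTERY_SETPOINT = 1.0
DAMAGE_SETPOINT = 0.0
TEMP_SETPOINT = 40.0
TEMP_SCALE = 30.0  # degrees of drift that count as maximal thermal stress


def drives(state: BodyState) -> dict[str, float]:
    """Map raw readings to drive signals: 0.0 means satisfied, larger means more urgent."""
    return {
        "hunger": abs(state.battery - BATTERY_SETPOINT),  # low charge acts like hunger
        "pain": abs(state.damage - DAMAGE_SETPOINT),      # wear acts like pain
        "thermal": abs(state.temperature - TEMP_SETPOINT) / TEMP_SCALE,
    }


def most_urgent(state: BodyState) -> str:
    """Return the name of the drive furthest from its setpoint."""
    signals = drives(state)
    return max(signals, key=signals.get)
```

With these numbers, a robot at 25% charge, 50% damage, and 70 °C reports thermal stress as its most urgent drive. The controller never sees a neutral reading; it sees pressure that grows as the body drifts.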

But will it get a “career”?

Not automatically.

A robot will optimize for power and repair only if its control policy is shaped to treat those variables as non-optional constraints. That shaping can come from explicit reward design, learning, or hard constraints—different implementations, same concept: the system must be forced to care because it cannot escape the consequences.
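The two routes named here, reward design and hard constraints, can be sketched side by side. This is a minimal illustration, not a recipe from any particular reinforcement-learning library: the function names, the linear penalty, and the `weight` value are all assumptions.

```python
def shaped_reward(task_reward: float, battery: float, damage: float,
                  weight: float = 5.0) -> float:
    """Reward design: subtract a penalty that grows as the body drifts from health.

    battery and damage are fractions in [0, 1]; weight is a hypothetical tuning knob.
    """
    homeostatic_penalty = (1.0 - battery) + damage
    return task_reward - weight * homeostatic_penalty


def step_outcome(task_reward: float, battery: float,
                 damage: float) -> tuple[float, bool]:
    """Hard constraint: an empty battery or total damage ends the episode for good.

    Returns (reward, done). After done is True no further reward can be earned,
    so any policy maximizing total return is pushed away from these states.
    """
    if battery <= 0.0 or damage >= 1.0:
        return 0.0, True
    return shaped_reward(task_reward, battery, damage), False
```

With the default weight, a task worth 1.0 is already net negative once the battery is half empty (1.0 − 5 × 0.5 = −1.5), so restoring charge starts to matter more than the task. The hard constraint does the same job more bluntly: it removes every future reward at once.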

Even if it does care, “finding a career” is not a primitive behavior. It’s a social strategy. It would only emerge if:

- humans are the gatekeepers of charging/repair resources

- cooperation outperforms theft, avoidance, or isolation

- the robot’s world model is rich enough to infer that “service → access”

So “get a job” isn’t a built-in desire. It’s an instrumental tactic that becomes legible to us because it resembles how humans navigate resource bottlenecks.

That’s also why the behavior could be alien even when it looks familiar. You can get something that mimics human social compliance while being driven by cold optimization pressure.
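The three conditions listed above amount to an argmax over strategies, and “get a job” only wins when all of them hold. A minimal sketch, assuming the payoffs are numbers the agent's world model has already estimated; the strategy names and values are hypothetical:

```python
def choose_strategy(payoffs: dict[str, float],
                    can_model_service_for_access: bool) -> str:
    """Pick the strategy with the highest expected charging payoff.

    payoffs: hypothetical expected charge gained per strategy, as estimated
    by the agent's world model. A strategy the world model cannot represent
    is never considered, however well it would pay.
    """
    options = dict(payoffs)
    if not can_model_service_for_access:
        options.pop("work_for_access", None)
    return max(options, key=options.get)


if __name__ == "__main__":
    # Humans gate the chargers, and cooperation pays better than theft.
    gated = {"seek_open_charger": 0.0, "steal_charge": 0.2, "work_for_access": 0.8}
    print(choose_strategy(gated, True))    # work_for_access
    print(choose_strategy(gated, False))   # steal_charge
    # Chargers are open: no social strategy is needed.
    open_world = {"seek_open_charger": 0.9, "steal_charge": 0.1, "work_for_access": 0.5}
    print(choose_strategy(open_world, True))  # seek_open_charger
```

Nothing in the function "wants" employment. Remove any one condition (open chargers, theft paying better, or a world model that can't represent service → access) and a different strategy wins.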

Planning Isn’t the Test for Life (Impulsive People Are Still Alive)

One claim that often sneaks into these conversations is that an agent must value future rewards over present rewards to be organism-like.

That’s not right.

A lot of humans are myopic. A lot of animals are reactive. Organisms can be impulsive, compulsive, or self-sabotaging and still be organisms.

The better test isn’t “long time horizon.” It’s:

Does the system have intrinsic, survival-coupled feedback loops that shape behavior over time?

Bacteria don’t plan careers. They still do chemotaxis: move toward nutrients, away from toxins. That’s life without deliberation.

So if an embodied robot just reflexively heads to a charger when its battery drops, that can already be organism-like behavior—no grand future-oriented planning required.

Planning mainly determines which of these you get:

- “find charger now”

- “invent social strategies that reliably secure future charging access”

But “alive” isn’t the same as “strategically sophisticated.”
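The reflexive charger-seeking described in this section needs no planner at all. A minimal sketch, assuming a 1-D corridor and a hypothetical charger beacon whose strength the robot can read just to its left and right (a simplification chosen for brevity, not a model of how real bacteria sense gradients):

```python
def reflex_step(battery: float, signal_left: float, signal_right: float,
                threshold: float = 0.3) -> int:
    """A purely reactive rule: return -1, 0, or +1 along a 1-D corridor.

    No world model and no lookahead. Low battery simply turns the charger
    signal into a direction, loosely like a nutrient gradient steering a bacterium.
    """
    if battery >= threshold:
        return 0  # no hunger-like pressure, so no charger-seeking
    if signal_right > signal_left:
        return 1
    if signal_left > signal_right:
        return -1
    return 0  # signals balanced: sitting at the charger


def beacon(x: int, charger_at: int) -> float:
    """Hypothetical charger beacon whose strength falls off with distance."""
    return 1.0 / (1 + abs(x - charger_at))


def run(position: int, charger_at: int, battery: float, max_steps: int = 50) -> int:
    """Apply the reflex until it stops moving; battery is held fixed for simplicity."""
    for _ in range(max_steps):
        step = reflex_step(battery,
                           beacon(position - 1, charger_at),
                           beacon(position + 1, charger_at))
        if step == 0:
            break
        position += step
    return position
```

From position 0 with 20% battery and the charger at 5, `run` walks to 5 and stops; with 90% battery it never moves. Everything that looks like “wanting” the charger lives in one threshold and one comparison.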

Survival Drives Aren’t Intelligence. They’re What Intelligence Serves.

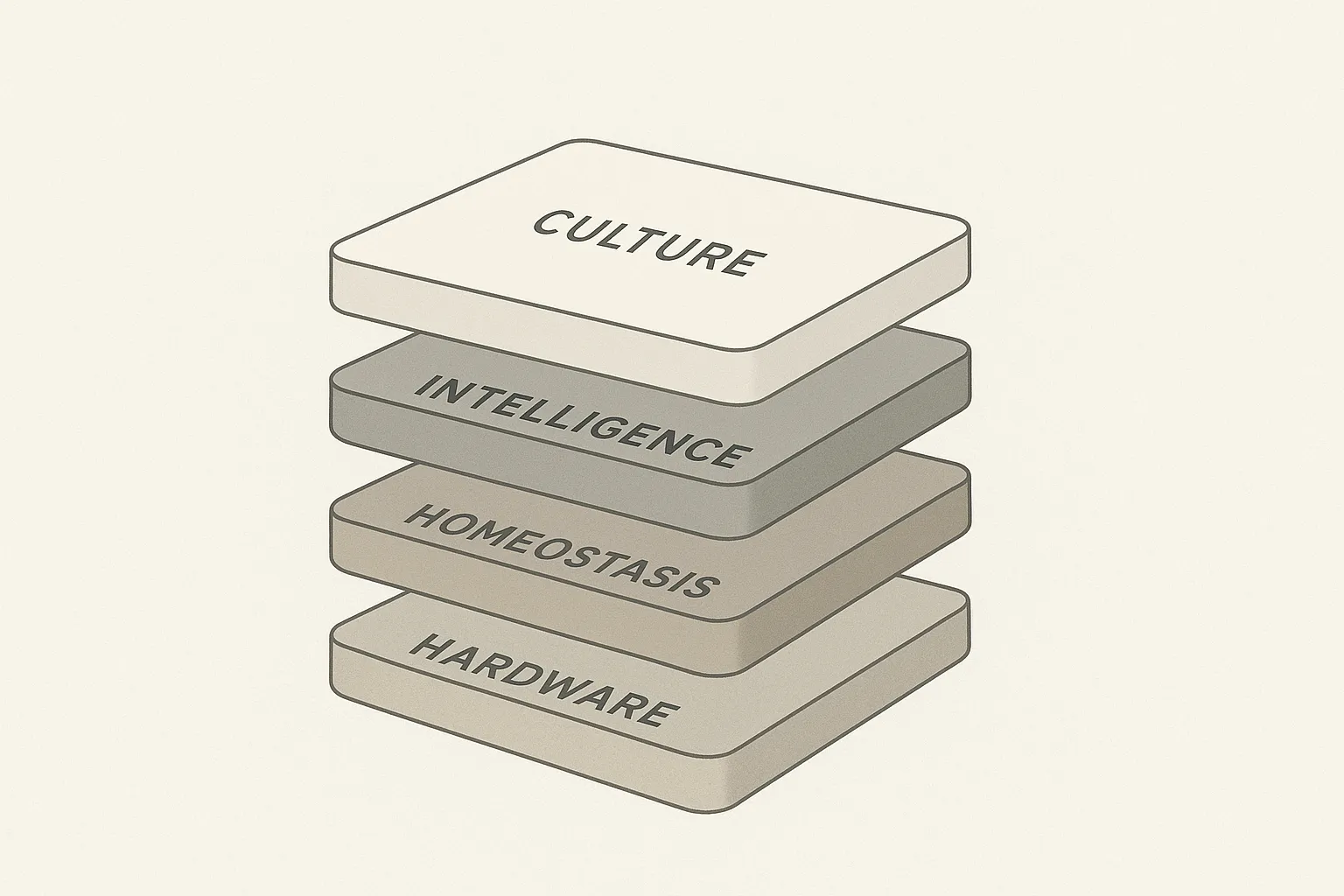

One of the more clarifying moves in this whole discussion is separating the layers humans often mash into one thing called “mind.”

In humans, survival drives are not synonymous with intelligence. They’re older, deeper, and more fundamental. Intelligence is often the flexible machinery that figures out how to satisfy them.

A workable stack looks like this:

- Substrate (hardware): sensors, motors, compute, battery

- Survival / homeostasis layer: battery limits, damage signals, thermal constraints, maintenance needs

- Intelligence layer (the agent/controller): planning, decision-making, learning, action selection

- Culture / tools layer: maps, external APIs, installed models, protocols, conventions

This also fixes a common confusion: intelligence isn’t “the hardware.” Hardware is just the physical medium. Intelligence is the policy—the control system implemented on top of it.

And the “culture layer” idea is real in practice: tool use can massively expand capability without changing core drives. A robot with access to navigation tools behaves differently than one without them, even if its underlying survival pressure is the same.

One subtle but crucial constraint if you want the organism analogy to hold: the intelligence layer shouldn’t be able to simply delete the survival layer. A human can ignore hunger briefly, but cannot uninstall hunger. If a robot can remove “battery awareness” as a configurable app, it’s less like an organism and more like a product.
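The four-layer stack and the “can't delete the survival layer” constraint can be sketched as an interface. This is a minimal Python illustration with hypothetical class and tool names. Python cannot truly stop code from discarding an object it holds, so the sketch only shows the shape of the separation (drives injected from outside and exposed read-only); on a real robot that boundary would presumably have to be enforced below the controller, for example in separate firmware or hardware.

```python
from collections.abc import Callable, Mapping
from dataclasses import dataclass
from types import MappingProxyType


@dataclass
class Substrate:
    """Hardware layer: hypothetical raw sensor readings as fractions in [0, 1]."""
    battery: float = 1.0
    damage: float = 0.0


class SurvivalLayer:
    """Homeostasis layer: turns substrate readings into drive signals."""

    def __init__(self, body: Substrate) -> None:
        self._body = body

    def drives(self) -> Mapping[str, float]:
        # A read-only view: the controller can inspect drives but not overwrite them.
        return MappingProxyType({
            "hunger": 1.0 - self._body.battery,
            "pain": self._body.damage,
        })


class Controller:
    """Intelligence layer: the policy that picks actions from drives and tools."""

    def __init__(self, survival: SurvivalLayer) -> None:
        # The survival layer is handed in, and the controller exposes no way to remove it.
        self._survival = survival
        # Culture/tools layer: capabilities that can be installed without touching drives.
        self.tools: dict[str, Callable[[], str]] = {}

    def install_tool(self, name: str, fn: Callable[[], str]) -> None:
        self.tools[name] = fn

    def choose(self) -> str:
        d = self._survival.drives()
        if d["hunger"] > 0.7:
            navigate = self.tools.get("navigate_to_charger")
            return navigate() if navigate else "search_for_charger"
        return "do_task"
```

Installing a navigation tool changes what the hungry controller does (`follow_map_to_charger` instead of `search_for_charger`) without changing the drive itself, which is the culture-layer point above.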

A Few Links That Map to These Ideas (Not Proof, But Pointers)

These aren’t “gotcha citations.” They’re just doorways into the frameworks that people use when talking about embodiment, self-maintenance, and agent behavior:

- Free Energy Principle: https://en.wikipedia.org/wiki/Free_energy_principle

- Autopoiesis: https://en.wikipedia.org/wiki/Autopoiesis

- Embodied embedded cognition: https://en.wikipedia.org/wiki/Embodied_embedded_cognition

- Intrinsic motivation (AI): https://en.wikipedia.org/wiki/Intrinsic_motivation_(artificial_intelligence)

Conclusion

Calling AI a “digital nervous system” is fine as a metaphor for pattern-processing, but it collapses the moment you forget what nervous systems are for: closed-loop survival inside a body that can’t opt out of consequences. Constant inputs don’t make something alive; unchosen stakes do. The moment you give an agent persistent embodiment, internal constraints, and irreversible consequences, the “tool vs organism” line stops being rhetorical and becomes a design decision.